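In production, clusters need to be deployed in a highly available configuration to keep workloads stable. In k8s, the apiserver is stateless and can be scaled horizontally for high availability, while kube-controller-manager and kube-scheduler achieve high availability through leader election. Under normal conditions, only one of the replicas of kube-scheduler or kube-controller-manager is actually running its business logic; the other replicas keep trying to acquire the lock and compete for leadership until one of them becomes the leader. If the running leader's process exits for some reason, or it loses the lock, the other replicas compete to become the new leader, and the winner then runs the business logic.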

kubernetes version: v1.12

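Using component high availability

k8s already implements high availability for kube-controller-manager and kube-scheduler; to enable it, add the --leader-elect=true flag to each component's configuration. The default values of the HA-related flags can be seen in each component's log: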

I0306 19:17:14.109511 161798 flags.go:33] FLAG: --leader-elect="true"

To see which instance is currently the leader of a component in kubernetes:

$ kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml &&

The hostname of the component's current leader is written to the control-plane.alpha.kubernetes.io/leader annotation.
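The annotation value is a small JSON document, so it can be decoded programmatically. The following is a minimal sketch of doing so with the Go standard library. The struct leaderRecord and the function parseLeaderAnnotation are hypothetical names for this example, and the sample value is made up for illustration rather than taken from a real cluster; only the JSON field names follow the leader election record written by client-go.

```go
package main

import (
	"encoding/json"
	"fmt"
	"time"
)

// leaderRecord is a hypothetical struct mirroring the JSON stored in the
// control-plane.alpha.kubernetes.io/leader annotation.
type leaderRecord struct {
	HolderIdentity       string    `json:"holderIdentity"`
	LeaseDurationSeconds int       `json:"leaseDurationSeconds"`
	AcquireTime          time.Time `json:"acquireTime"`
	RenewTime            time.Time `json:"renewTime"`
	LeaderTransitions    int       `json:"leaderTransitions"`
}

// parseLeaderAnnotation decodes the annotation value into a leaderRecord.
func parseLeaderAnnotation(value string) (leaderRecord, error) {
	var rec leaderRecord
	err := json.Unmarshal([]byte(value), &rec)
	return rec, err
}

// sampleAnnotation is an invented value used only for this example.
const sampleAnnotation = `{"holderIdentity":"node-1","leaseDurationSeconds":15,` +
	`"acquireTime":"2019-03-06T11:17:30Z","renewTime":"2019-03-06T11:18:00Z",` +
	`"leaderTransitions":2}`

func main() {
	rec, err := parseLeaderAnnotation(sampleAnnotation)
	if err != nil {
		panic(err)
	}
	fmt.Printf("leader=%s lease=%ds transitions=%d\n",
		rec.HolderIdentity, rec.LeaseDurationSeconds, rec.LeaderTransitions)
}
```

In practice the annotation string would come from the Endpoints object returned by the kubectl command above or by a client library.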

How Leader Election is implemented

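Leader election is essentially a competition for a distributed lock. In Kubernetes, this lock takes the form of an Endpoints resource: whoever creates the resource first obtains the lock, and then keeps updating the resource to retain ownership of it.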

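The rest of this section walks through how kube-controller-manager competes for leadership. After loading and configuring its parameters, the controller manager (cm) executes the Run function. The code is in k8s.io/kubernetes/cmd/kube-controller-manager/app/controllermanager.go: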

// Run runs the KubeControllerManagerOptions. This should never exit.

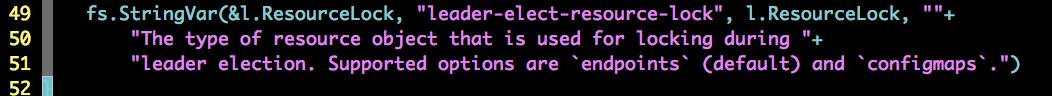

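- 1. Initialize the resource lock. The default resource lock in kubernetes is endpoints, that is, the value of c.ComponentConfig.Generic.LeaderElection.ResourceLock is "endpoints". I could not find where ResourceLock is initialized in the code; I only saw the description of the parameter and the default value printed in the log.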

An EventRecorder is also passed in when the resource lock is initialized; it sends the corresponding events to the apiserver whenever the leader changes.

- 2. The resource lock rl is used by controller-manager for leader election; the RunOrDie function is where the election actually takes place.

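- 3. Callbacks defines what to do after a state change. On becoming leader, the run function in OnStartedLeading is executed. run is the core of controller-manager: it initializes and starts the controllers for the resources it manages. Below are all the controllers in kube-controller-manager: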

func NewControllerInitializers(loopMode ControllerLoopMode) map[string]InitFunc {

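OnStoppedLeading is what runs when the instance transitions from leader to non-leader. In this version it only logs a message, through glog.Fatalf, which also terminates the process after logging.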

func RunOrDie(ctx context.Context, lec LeaderElectionConfig) {

RunOrDie first calls NewLeaderElector to initialize a LeaderElector object, and then calls the LeaderElector's Run method to carry out the election.

func (le *LeaderElector) Run(ctx context.Context) {

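Run first calls acquire to try to obtain the lock. Once the lock is obtained, it invokes the OnStartedLeading callback to start the required controllers, and then calls renew to update the lock periodically and remain leader.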
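The order of these steps can be illustrated with a small, dependency-free sketch. It is not the client-go source: the elector and callbacks types are hypothetical stand-ins, and acquire and renew are injected as plain functions so that the control flow (acquire, start leading in a goroutine, renew until the lease is lost, then stop leading) can be observed in isolation.

```go
package main

import (
	"context"
	"fmt"
	"sync"
)

// callbacks is a hypothetical stand-in for the leader election callbacks.
type callbacks struct {
	OnStartedLeading func(ctx context.Context)
	OnStoppedLeading func()
}

// elector is a hypothetical, simplified elector with injectable steps.
type elector struct {
	acquire func(ctx context.Context) bool // blocks until the lock is held
	renew   func(ctx context.Context)      // returns when the lease is lost
	cb      callbacks
}

// Run acquires the lock, starts the leader workload in a goroutine, and
// then blocks in renew. OnStoppedLeading always runs when Run returns.
func (e *elector) Run(ctx context.Context) {
	defer e.cb.OnStoppedLeading()
	if !e.acquire(ctx) {
		return // ctx was cancelled before the lock was obtained
	}
	ctx, cancel := context.WithCancel(ctx)
	defer cancel() // tells the leader workload to stop
	go e.cb.OnStartedLeading(ctx)
	e.renew(ctx)
}

// simulate runs one election cycle and returns the observed events.
func simulate() []string {
	var mu sync.Mutex
	var events []string
	record := func(s string) {
		mu.Lock()
		defer mu.Unlock()
		events = append(events, s)
	}
	started := make(chan struct{})
	e := &elector{
		acquire: func(ctx context.Context) bool { record("acquired"); return true },
		// Pretend the lease is lost right after the workload has started.
		renew: func(ctx context.Context) { <-started; record("renew failed") },
		cb: callbacks{
			OnStartedLeading: func(ctx context.Context) { record("started leading"); close(started) },
			OnStoppedLeading: func() { record("stopped leading") },
		},
	}
	e.Run(context.Background())
	return events
}

func main() {
	for _, ev := range simulate() {
		fmt.Println(ev)
	}
}
```

Running the workload in its own goroutine is what allows the same call to keep renewing the lock while the controllers do their work.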

func (le *LeaderElector) acquire(ctx context.Context) bool {

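acquire first initializes a ctx, then uses wait.JitterUntil to call le.tryAcquireOrRenew periodically until the resource lock is obtained. While the lock cannot be obtained, it keeps retrying at intervals of RetryPeriod. Once the lock is obtained, it cancels ctx to tell wait.JitterUntil to stop retrying. tryAcquireOrRenew is the core method.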

func (le *LeaderElector) tryAcquireOrRenew() bool {

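The main logic of this function is:

- 1. Fetch the ElectionRecord. If none exists, create a new one; if creation succeeds, the lock has been acquired and this instance is now the leader.

- 2. Once the resource lock has been fetched, inspect its contents: check whether the current id is the leader and whether the lock has expired. If the lock has not expired and the current id is not the leader, return immediately.

- 3. If the current id is the leader, set the corresponding time field to the current time and update the resource lock to renew the lease.

- 4. If the current id is not the leader but the lock has expired, try to seize the lock; on success this instance becomes the leader, otherwise return.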

Finally, the renew method:

func (le *LeaderElector) renew(ctx context.Context) {

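renew is only called after the lock has been acquired. It periodically updates the data in the resource lock to make sure the instance continues to hold the lock and remains leader.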
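A renewal loop along these lines might look like the following sketch. It is written against the standard library only and makes several assumptions: tryRenew is a hypothetical placeholder for the renewal attempt, the renewDeadline parameter is modeled on client-go's RenewDeadline setting (which the text above does not cover), and the helper sleep is introduced just for this example.

```go
package main

import (
	"context"
	"fmt"
	"time"
)

// sleep waits for d and returns false if ctx is cancelled first.
func sleep(ctx context.Context, d time.Duration) bool {
	select {
	case <-ctx.Done():
		return false
	case <-time.After(d):
		return true
	}
}

// renew keeps calling tryRenew every retryPeriod. If no attempt succeeds
// within renewDeadline, the lease is considered lost and renew returns.
func renew(ctx context.Context, retryPeriod, renewDeadline time.Duration, tryRenew func() bool) {
	for {
		deadline := time.Now().Add(renewDeadline)
		for !tryRenew() {
			if time.Now().After(deadline) {
				return // could not renew in time: leadership is lost
			}
			if !sleep(ctx, retryPeriod) {
				return
			}
		}
		if !sleep(ctx, retryPeriod) {
			return
		}
	}
}

// simulateRenew renews successfully three times, then fails until the
// deadline passes. It returns the number of successes and failures.
func simulateRenew() (succeeded, failed int) {
	tryRenew := func() bool {
		if succeeded < 3 {
			succeeded++
			return true
		}
		failed++
		return false
	}
	renew(context.Background(), time.Millisecond, 20*time.Millisecond, tryRenew)
	return succeeded, failed
}

func main() {
	ok, _ := simulateRenew()
	fmt.Println("renewals before losing the lease:", ok) // 3
}
```

When renew returns, the caller is expected to stop acting as leader, which is where the OnStoppedLeading callback comes in.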

Using the Leader Election feature

Below is a demo that uses the k8s.io/client-go/tools/leaderelection package from k8s:

package main

Run the demo simultaneously under several different hostnames and test leader failover; the leader changes are recorded in the events:

# kubectl describe endpoints test -n kube-system

Summary

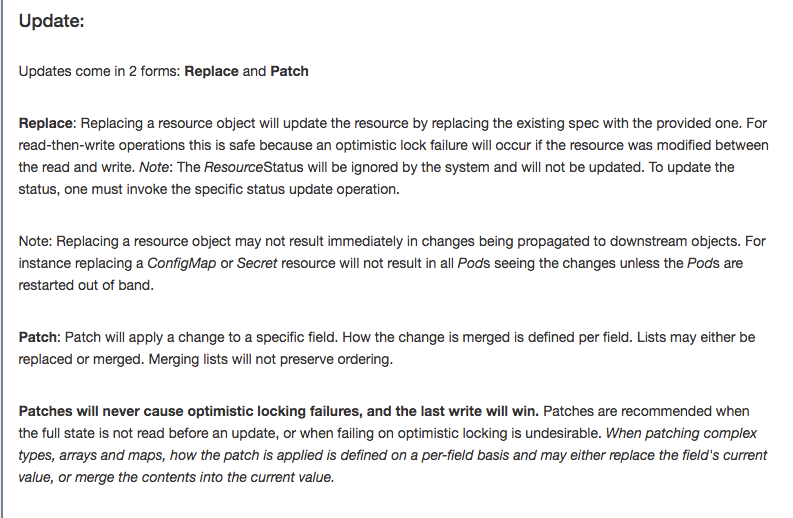

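This article described how leader election is implemented when kube-controller-manager is started in HA mode. k8s hands the resource lock from one holder to the next by creating an Endpoints resource and continuously updating it. Other distributed lock implementations are generally built directly on existing middleware such as redis, zookeeper, or etcd, where every lock operation is atomic. So how are atomic operations achieved in the k8s election process? They are ultimately provided by etcd as well: the update that renews the lock uses optimistic locking, implemented by comparing resourceVersion. The details are left for the next installment.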
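The idea of optimistic locking by version comparison can be shown with a toy in-memory store. This is only a sketch of the general technique, not how the apiserver or etcd is implemented: the object and store types are hypothetical, and resourceVersion is modeled as an integer for simplicity, whereas Kubernetes treats it as an opaque string.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// object is a hypothetical resource carrying a version and a lock holder.
type object struct {
	ResourceVersion int
	Holder          string
}

var errConflict = errors.New("conflict: resourceVersion does not match")

// store is a toy stand-in for the apiserver backed by etcd.
type store struct {
	mu  sync.Mutex
	obj object
}

// Get returns a copy of the stored object.
func (s *store) Get() object {
	s.mu.Lock()
	defer s.mu.Unlock()
	return s.obj
}

// Update succeeds only if the caller's copy carries the current version;
// on success the version is bumped so stale writers are rejected.
func (s *store) Update(o object) error {
	s.mu.Lock()
	defer s.mu.Unlock()
	if o.ResourceVersion != s.obj.ResourceVersion {
		return errConflict
	}
	o.ResourceVersion++
	s.obj = o
	return nil
}

func main() {
	s := &store{obj: object{ResourceVersion: 1}}

	// Two candidates read the same version, then both try to take the lock.
	a, b := s.Get(), s.Get()
	a.Holder, b.Holder = "a", "b"

	fmt.Println(s.Update(a))    // <nil>
	fmt.Println(s.Update(b))    // conflict: resourceVersion does not match
	fmt.Println(s.Get().Holder) // a
}
```

Because the second writer's copy is stale, its update is rejected rather than silently overwriting the first, so at most one candidate can win a given round.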
